Advanced Reinforcement Learning for Safe and Adaptive Optimization in Complex Industrial Systems

Theory: Digital Twin, Reinforcement Learning, Operations Research

Application: Smart Manufacturing, Industrial Automation

Funding Source: National Key R&D Project

Background and Motivation

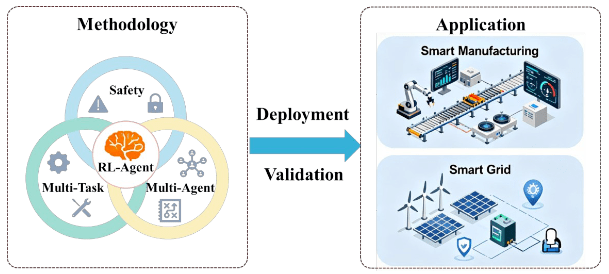

Research Topic: Modern industrial systems, from smart grids to smart manufacturing, are becoming increasingly complex and dynamic. Traditional optimization methods struggle to cope with the uncertainties and interdependencies inherent in these systems. This research develops a new generation of Reinforcement Learning (RL) methodologies that serve as robust, adaptive, and safe decision-making “brains” for such systems. The core focus is on advancing RL theory itself rather than on solving a single application-specific problem.

Research Significance: The originality of this work lies in tackling three fundamental, intertwined challenges that currently hinder the real-world deployment of RL: ensuring operational safety, enabling effective multi-agent coordination, and achieving rapid adaptation to new tasks (generalization). Most research addresses these issues in isolation, whereas we aim to develop a unified methodological framework. Such a holistic solution is key to unlocking the potential of AI in industrial automation, moving from task-specific solutions to genuinely intelligent, system-wide optimization.

Research Importance: The potential importance is twofold. Academically, this research will contribute novel algorithms and theoretical insights to the core fields of Safe, Multi-Agent, and Meta-Reinforcement Learning. Practically, the methodologies are intended to be transferable, forming a versatile “optimization engine” applicable to a wide range of critical domains. Validating the framework on the challenging case of battery disassembly line management will provide a blueprint for the design of next-generation intelligent industrial systems.

Objectives and Aims

1. To develop a robust Safe Reinforcement Learning framework for decision-making in systems with critical and dynamic operational constraints, extending prior work on constrained optimization to handle large-scale industrial process scheduling.

2. To design a scalable Multi-Agent Reinforcement Learning algorithm for decentralized process coordination and resource management, focusing on credit assignment and emergent collaborative strategies in industrial robotics.

3. To investigate a Multi-Task and Meta-Reinforcement Learning approach to enable the rapid adaptation of system-wide operational strategies to novel scenarios (e.g., different product models, changing market demands) with minimal retraining.

Scientific Problems

1. Safety & Efficiency Dilemma: How can RL agents learn optimal policies that maximize long-term rewards (e.g., throughput, profit) while guaranteeing 100% satisfaction of hard safety/operational constraints during the entire learning process, not just after convergence?

2. Decentralized Coordination in Complex Systems: How can multiple, self-interested agents learn to cooperate to achieve a global objective in a non-stationary environment (where other agents’ policies are constantly changing) with only partial system observability?

3. The Generalization Gap: How can an RL framework be designed to learn the underlying principles of system management, rather than memorize specific policies, so that it avoids catastrophic forgetting and adapts robustly in a “zero-shot” or “few-shot” manner to fundamentally new tasks? This is a major bottleneck for real-world industrial deployment.
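
To make the first problem concrete, the sketch below shows one known way to keep hard constraints satisfied during learning and not only after convergence: an action shield that filters out unsafe actions before the agent chooses. This is an illustration under strong assumptions, not the framework proposed here. The toy environment, the names `step`, `safe_actions`, and `train`, and all hyperparameters are hypothetical, and the shield assumes the transition model is known, which rarely holds in real industrial systems.

```python
import random

# Hypothetical toy environment: positions 0..5 on a line, start at 2.
# Position 0 is unsafe (a hard-constraint violation); position 5 is the goal.
N_STATES, START, UNSAFE, GOAL = 6, 2, 0, 5
ACTIONS = (-1, 1)  # move left / move right


def step(state, action):
    """Deterministic transition; reward 1.0 only on reaching the goal."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if nxt == GOAL else 0.0
    done = nxt in (GOAL, UNSAFE)
    return nxt, reward, done


def safe_actions(state):
    """Shield: keep only actions whose successor state is not unsafe.

    Assumption: the transition model is known exactly.
    """
    return [a for a in ACTIONS if step(state, a)[0] != UNSAFE]


def train(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.3, seed=0):
    """Tabular Q-learning in which every action passes through the shield."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    violations = 0
    for _ in range(episodes):
        state = START
        for _ in range(50):  # cap on steps per episode
            allowed = safe_actions(state)
            if rng.random() < epsilon:
                action = rng.choice(allowed)  # explore, but only among safe actions
            else:
                # Greedy choice among safe actions, ties broken at random.
                action = max(allowed, key=lambda a: (q[(state, a)], rng.random()))
            nxt, reward, done = step(state, action)
            if nxt == UNSAFE:
                violations += 1
            best_next = 0.0 if done else max(q[(nxt, a)] for a in safe_actions(nxt))
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = nxt
            if done:
                break
    return q, violations


if __name__ == "__main__":
    q_table, n_violations = train()
    print("constraint violations during training:", n_violations)
```

Because exploration is restricted to the shielded action set, the violation count stays at zero throughout training by construction. The open question posed above is how to obtain comparable guarantees when the model is unknown, the constraints change over time, and the state space is too large for a table.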